Assured autonomy: What, from where, how, and what next?

What is assured autonomy?

Because there is not yet a commonly accepted definition of “assured autonomy,” we define the term at the outset. Autonomous systems comprise multiple networked computing elements that can perform complex real-world functions, such as decision-making, without requiring human involvement. These components include sensor devices, edge devices, and server-class devices. Network speeds may vary widely, and the network may even be unavailable, forcing the components to operate in disrupted, disconnected, intermittent, and low-bandwidth (DDIL) environments. The components, which may be distributed locally or over a broader region, collaborate to achieve autonomous or semi-autonomous functions, such as robotic navigation and decision support.

What current technology provides building blocks for assured autonomy?

Assured autonomy builds on several foundational pillars, each of which is an active area of inquiry today:

- Adversarial machine learning (ML): This involves the study of how to make machine learning algorithms (typically individual algorithms) resilient to manipulation (of the data or the model) by adversaries.

- Secure distributed protocols: This refers to the design of theoretically sound and secure distributed protocols that tolerate attacks and still provide acceptable guarantees, as long as the attacks are bounded in number or intensity. These protocols typically operate in deterministic settings rather than data-driven settings.

- Reinforcement learning (RL): This AI approach trains agents through positive and negative rewards, primarily for planning and control of autonomous systems. Recently, RL has started demonstrating its ability to realistically represent and learn unstructured, highly complex environments with sufficient generalizability, adaptability, and computational efficiency. However, it is still a challenge to guarantee the safety and reliability of these methods.

- Tiny ML: This represents the approach of using compressed ML models on static mobile or embedded platforms at the edge, primarily for multi-modal scene perception of autonomous systems. Distributed processing at the edge on multi-modal sensor data is overcoming the limitations of conventional centralized learning approaches (such as network overload). These edge computing models can be subjected to adversarial attacks and other security challenges.

- Control theory: Control theory offers a useful reference point for assured autonomy. Much as assured autonomy aims to do, robust control and adaptive control techniques keep a system within the bounds of expected trajectories when the parameters of the system (and its environment) are uncertain or vary over time and when disturbances occur.
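
As an illustration of the control-theory pillar, the following is a minimal sketch of a robustness guarantee, not a method taken from the project. It assumes a hypothetical scalar plant x[k+1] = a*x[k] + u[k] + d[k], where the parameter a is only known to lie in [0.8, 1.2] and the disturbance satisfies |d[k]| <= 0.1. A fixed feedback gain is chosen so that the closed loop contracts for every admissible a, which yields a worst-case bound on the state. All names and numbers are assumptions made for the example.

```python
import random

def simulate(a, gain, d_max, x0=1.0, steps=50, seed=0):
    """Simulate x[k+1] = a*x[k] + u[k] + d[k] with feedback u[k] = -gain*x[k]."""
    rng = random.Random(seed)
    x = x0
    trajectory = [x]
    for _ in range(steps):
        d = rng.uniform(-d_max, d_max)  # bounded but otherwise unknown disturbance
        u = -gain * x                   # fixed proportional feedback
        x = a * x + u + d
        trajectory.append(x)
    return trajectory

def worst_case_bound(a_min, a_max, gain, d_max):
    """Ultimate bound on |x| that holds for every a in [a_min, a_max]."""
    # Worst closed-loop contraction factor over the uncertain parameter range.
    rho = max(abs(a_min - gain), abs(a_max - gain))
    if rho >= 1.0:
        raise ValueError("gain does not stabilize the whole parameter range")
    return d_max / (1.0 - rho)

# Hypothetical uncertainty range, gain, and disturbance level.
A_MIN, A_MAX, GAIN, D_MAX = 0.8, 1.2, 1.0, 0.1
bound = worst_case_bound(A_MIN, A_MAX, GAIN, D_MAX)

# The same controller is used for every plant in the uncertainty range.
final_states = {a: simulate(a, GAIN, D_MAX)[-1] for a in (0.8, 0.9, 1.0, 1.1, 1.2)}
```

With these assumed values the contraction factor is at most 0.2, so once the initial transient has decayed the state stays within roughly 0.125 of the origin regardless of which admissible parameter the plant actually has. The point of the sketch is that the guarantee is stated over a set of possible systems and disturbances, not over one nominal model.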

What are you doing in the Purdue Army Artificial Intelligence Innovation Institute (A2I2) project?

Let’s discuss at a high level the activities we are pursuing in the Purdue A2I2 project (August 2020–2025; the project also involves a subcontract with Princeton University), which falls within the Army Research Laboratory’s (ARL’s) broader Army Artificial Intelligence Innovation Institute (A2I2).

- Developing secure mission planning algorithms through RL: Because RL is an experience-driven technique, it struggles with situations for which it has no prior knowledge. In applications such as mission planning, the outputs of RL algorithms could be targets for adversarial kinetic and non-kinetic effects. Creating RL solutions that account for the threat of adversarial attacks and deception activities is crucial, since such threats will be key aspects of future Army Multi-Domain Operations. We are developing secure RL algorithms within A2I2.

- Developing secure decentralized learning algorithms that can tolerate model or data poisoning: In this thrust, we are developing algorithms that can execute on the autonomous components and tolerate adversaries that poison either the data (at training or at inference time) or the model. We have considered adversaries that can be strategically placed, for example, at the server, at the intermediate nodes, or at the sensor nodes. These adversaries can collude among themselves. Our defense algorithms leverage advantages that would be expected in operational settings, such as a priori knowledge of the learning process or the correlation between the cyber and the physical signals (which an attack would violate).

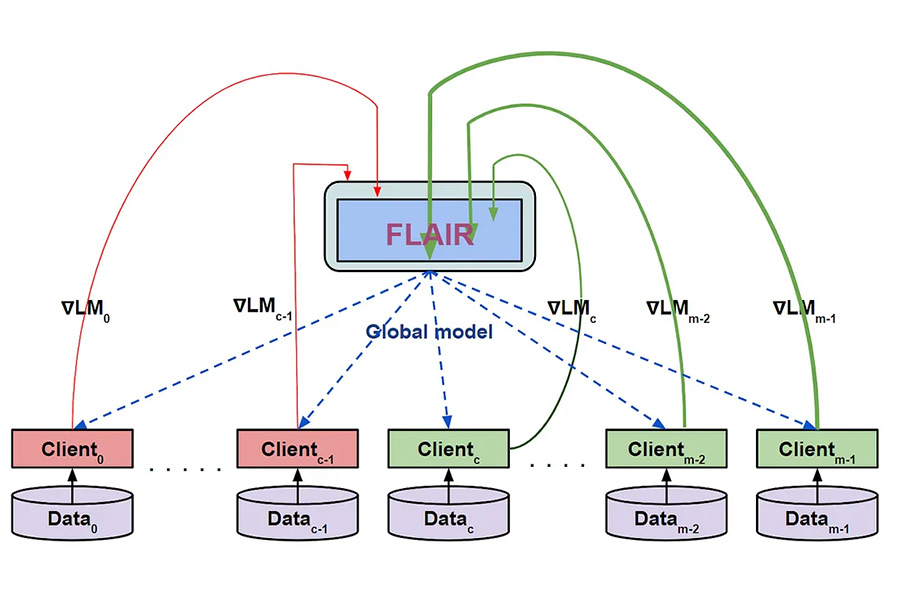

- Understanding the privacy risks for local data when using federated learning (FL): FL is a widely used framework for learning an ML model collaboratively. In the classical FL structure, there are multiple clients, each with its own local data, which it may want to keep private. A server is responsible for learning a global ML model using gradient updates from all the clients and then sharing that global model with all the clients. In this line of inquiry, we are first characterizing the privacy risks that remain despite the use of FL. We have shown, for example, that even with secure aggregation, it is possible for a server to leak private data of the clients. We are concurrently developing defense techniques, for instance, having the clients cooperate and verify the models that they receive from the server. We are also laying out a new approach to learning that sits between the extremes of FL and peer-to-peer learning: a hierarchical learning approach that mirrors the hierarchy of device classes in our autonomous systems.

- Secure compression of streaming ML algorithms to mobile and embedded devices: We are compressing the ML algorithms that perform inference on streaming data (such as streaming video or audio) so they can fit on terrestrial robots or drone swarms. Uniquely, the compressed versions can still provide probabilistic guarantees on accuracy and latency. We have developed key techniques for performing such compression in a content-aware manner (for example, a complex frame in the stream gets more resources) and in a contention-aware manner (the device may not be fully dedicated to running only this streaming algorithm).
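
To make the poisoning threat in the decentralized and federated learning thrusts concrete, here is a minimal sketch that contrasts plain averaging of client updates with a coordinate-wise median. The median is a standard robust aggregation rule from the literature and is used here only as a stand-in; it is not the project's defense, which the text describes as relying on a priori knowledge of the learning process and on cyber-physical correlation. The client updates and the poisoned value are made-up numbers.

```python
from statistics import mean, median

def aggregate(updates, reducer):
    """Combine client updates coordinate by coordinate using `reducer`."""
    return [reducer(coords) for coords in zip(*updates)]

# Hypothetical two-parameter gradient updates from four honest clients.
honest_updates = [
    [0.9, -1.1],
    [1.0, -1.0],
    [1.1, -0.9],
    [1.0, -1.0],
]
# One compromised client submits an arbitrarily large update.
poisoned_update = [100.0, 100.0]
updates = honest_updates + [poisoned_update]

mean_result = aggregate(updates, mean)      # pulled far away by the outlier
median_result = aggregate(updates, median)  # stays at the honest consensus
```

In this example a single poisoned client drags the averaged update to roughly [20.8, 19.2], while the coordinate-wise median remains at [1.0, -1.0]. The median only helps while fewer than half of the updates in each coordinate are corrupted, which matches the idea that guarantees hold as long as attacks are bounded in number.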

What does the future hold?

Our gaze into the future is framed by two questions:

- How will assured autonomy shape the future environment?

- How should assured autonomy evolve over time?

The technological environment of our civilization is clearly trending toward ever-greater roles for autonomous systems. In every sphere of human activity (everyday life, industry, government, military, and others), intelligent physical robots and intelligent virtual decision-supporting tools are emerging as highly capable and indispensable. Our world is becoming more reliant on autonomy, and therefore increasingly vulnerable to the anomalous, unintended behaviors of autonomous systems.

We also see that autonomous systems increasingly operate close to the edge, that is, in physical and cyber proximity to end users and farther away from centralized data and control centers. As a result, these systems are becoming less reliant on centralized control, monitoring, and management.

As they move closer to the edge, intelligent autonomous entities will have increased peer-to-peer communications and collaboration and be increasingly self-organized and self-managed. This will further erode their dependence on centralized control and increase the likelihood of emergent collective behaviors of autonomous systems. Some of these emergent behaviors may be undesirable and dangerous.

Beyond naturally occurring undesirable emergent behaviors, the behaviors of hostile actors will continue to present a significant threat. History has not been kind to hopes of a kinder, gentler cyber world. Undoubtedly, the future world of ubiquitous autonomous systems will be threatened by both unintentionally emerging behaviors and intentionally hostile actors. Large-scale propagation and catastrophic collapse cannot be ruled out in such a densely connected and collaborating society of autonomous systems.

What future work needs to be done?

We will need to provide guarantees for autonomous systems’ operation under uncertainty, which may include uncertain failures and unknown attacks.

In line with our predictions, assured autonomy as a field must bear greater fruit in the years to come. It needs to provide a design blueprint for components, at different levels of autonomy and resource-richness, to operate together in a secure manner. These techniques also need to be composable, so that we can build up from assured components to assured autonomous systems. Finally, such a design blueprint should be realizable in software and hardware without a gargantuan effort. This points to the desired property that implementations be standardized and plug-and-play.

Further, these components need to have an added dimension of autonomy — not just autonomy in functions, but also autonomy in non-functional properties, such as security and reliability. This means the components must be able to autonomously (to differing degrees) reason about their security and reliability, and take measures when these properties are violated.
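
One way to picture a component that reasons about its own security and reliability is a run-time self-monitor, sketched below under stated assumptions. The class name, the two checks, the thresholds, and the "degraded" fallback are all hypothetical and chosen only for illustration; a real component would need far richer reasoning and countermeasures.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Telemetry = Dict[str, float]

@dataclass
class SelfMonitoringComponent:
    """Hypothetical component that checks its own properties at run time."""
    checks: Dict[str, Callable[[Telemetry], bool]]
    mode: str = "normal"
    violations: List[str] = field(default_factory=list)

    def step(self, telemetry: Telemetry) -> str:
        """Evaluate every check; fall back to degraded mode on any violation."""
        failed = [name for name, ok in self.checks.items() if not ok(telemetry)]
        if failed:
            self.violations.extend(failed)
            self.mode = "degraded"  # placeholder countermeasure; stays latched
        return self.mode

component = SelfMonitoringComponent(
    checks={
        # Reliability: the upstream sensor has reported recently.
        "sensor_fresh": lambda t: t["seconds_since_sensor_update"] <= 2.0,
        # Security: cyber and physical readings of the same quantity agree.
        "cyber_physical_consistent": lambda t: abs(
            t["reported_speed"] - t["measured_speed"]
        ) <= 0.5,
    }
)

healthy = {"seconds_since_sensor_update": 0.4, "reported_speed": 3.0, "measured_speed": 3.1}
spoofed = {"seconds_since_sensor_update": 0.4, "reported_speed": 3.0, "measured_speed": 7.5}

mode_after_healthy = component.step(healthy)
mode_after_spoofed = component.step(spoofed)
```

In this sketch the component keeps operating normally on consistent telemetry and switches itself to a degraded mode when the reported and measured speeds disagree, recording which property was violated. Different components could carry different sets of checks, reflecting the differing degrees of autonomy mentioned above.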

In summary, in this article we have defined the term “assured autonomy” and described the pillars on which it is built. We then outlined our current activities in the Purdue A2I2 project. Finally, we hypothesized about our expectations for the future and the role assured autonomy is likely to play in it.

Saurabh Bagchi, PhD

Professor, Elmore Family School of Electrical and Computer Engineering

Director, Army Research Laboratory-funded Assured Autonomy Innovation Institute (A2I2) project at Purdue

Founding Director, Purdue Center for Resilient Infrastructures, Systems, and Processes (CRISP)

Priya Narayanan, PhD

Chief, Autonomous Systems Division, DEVCOM Army Research Laboratory

Alexander Kott, PhD

Chief Scientist and Senior Research Scientist-Cyber Resiliency, DEVCOM Army Research Laboratory

Source: Assured autonomy: What, from where, how, and what next?