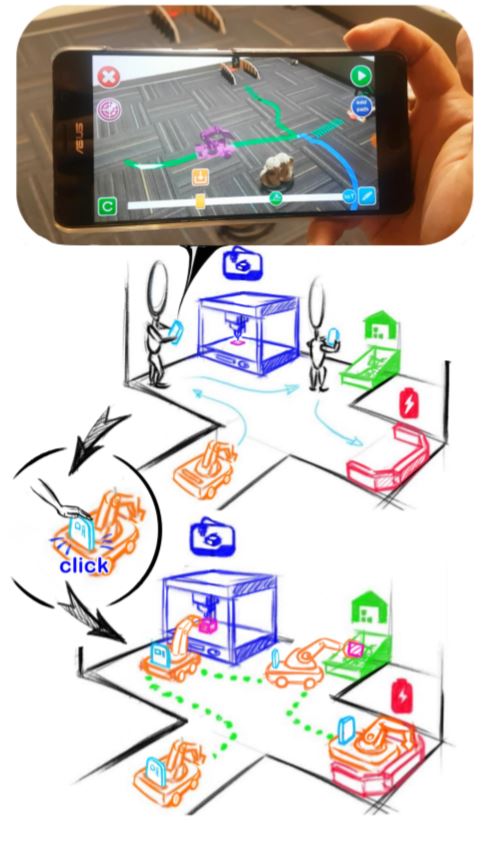

We present V.Ra, a visual and spatial programming system for robot-IoT task authoring. In V.Ra, programmable mobile robots serve as binding agents that link stationary IoT devices and perform collaborative tasks. We establish an ecosystem that coherently connects the three key elements of robot task planning (human-robot-IoT) with a single AR-SLAM device. Users author tasks in an analogous manner through the Augmented Reality (AR) interface. Placing the device on the mobile robot then transfers the task plan directly, in a what-you-do-is-what-robot-does (WYDWRD) manner. The mobile device mediates the interactions among the user, the robot, and IoT-oriented tasks, and uses its SLAM capability to guide path-planning execution.

V.Ra: An In-Situ Visual Authoring System for Robot-IoT Task Planning with Augmented Reality

Authors: Yuanzhi Cao, Zhuangying Xu, Fan Li, Wentao Zhong, Ke Huo, and Karthik Ramani

Extended Abstracts of the 2019 CHI Conference on Human Factors in Computing Systems, LBW0151:1–LBW0151:6. https://doi.org/10.1145/3290607.3312797

https://doi.org/10.1145/3322276.3322278
