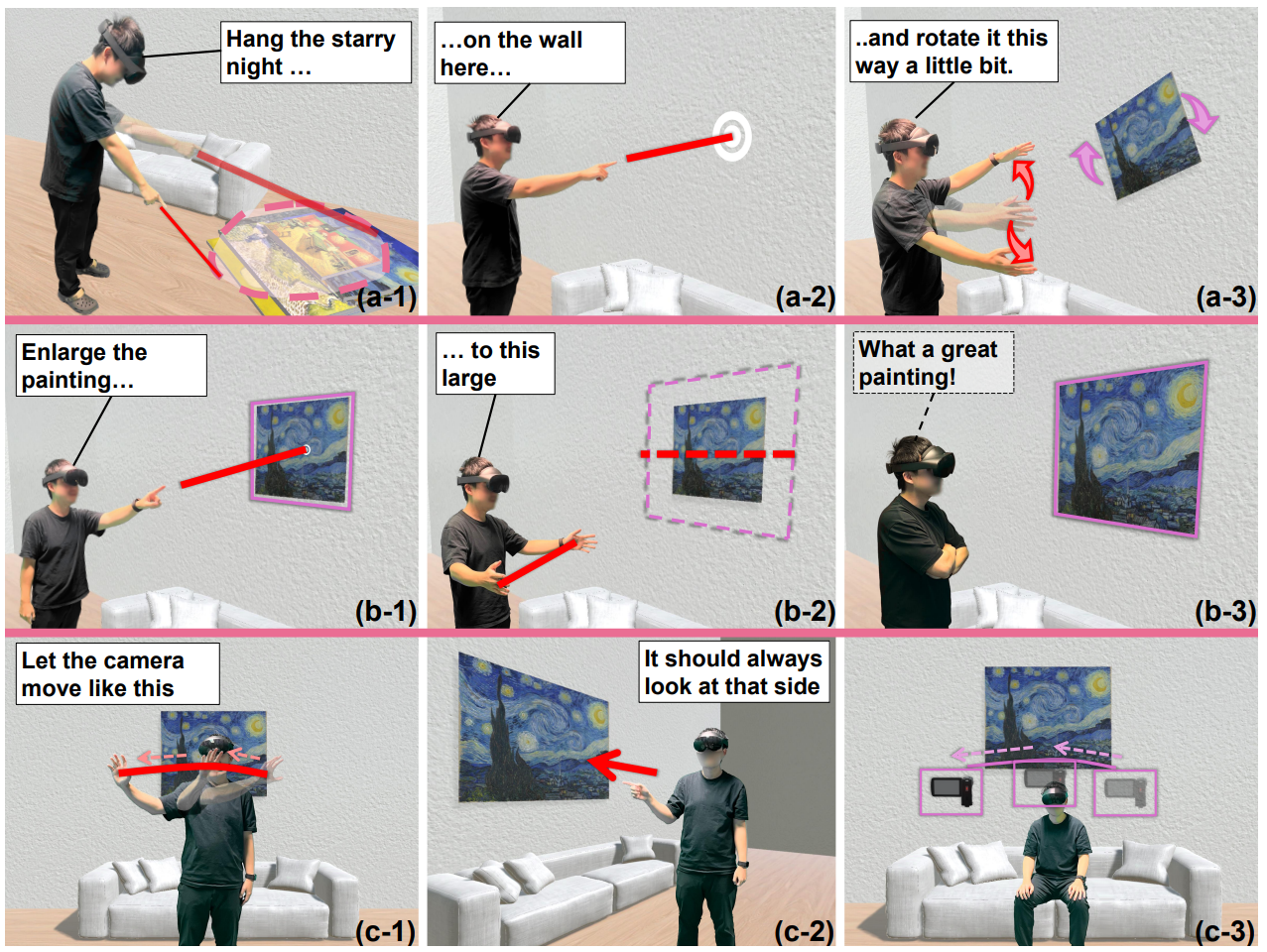

Large Language Model (LLM)-based copilots have shown great potential in Extended Reality (XR) applications. However, the user faces challenges when describing the 3D environments to the copilots due to the complexity of conveying spatial-temporal information through text or speech alone. To address this, we introduce GesPrompt, a multimodal XR interface that combines co-speech gestures with speech, allowing end-users to communicate more naturally and accurately with LLM-based copilots in XR environments. By incorporating gestures, GesPrompt extracts spatial-temporal reference from co-speech gestures, reducing the need for precise textual prompts and minimizing cognitive load for end-users. Our contributions include (1) a workflow to integrate gesture and speech input in the XR environment, (2) a prototype VR system that implements the workflow, and (3) a user study demonstrating its effectiveness in improving user communication in VR environments.

GesPrompt: Leveraging Co-Speech Gestures to Augment LLM-Based Interaction in Virtual Reality

Authors: Xiyun Hu, Dizhi Ma, Fengming He, Zhengzhe Zhu, Shao-Kang Hsia, Chenfei Zhu, Ziyi Liu, Karthik Ramani

In Proceedings of the 2025 ACM Designing Interactive Systems Conference

https://doi.org/10.1145/3715336.3735769

Xiyun Hu

PhD student in Mechanical Engineering, Robotics area. Focusing on human-computer iteration in AR/VR/MR/XR and mechatronics.