Seeing through your mentor’s own eyes

Augmented reality (AR) is a leap forward from telestration, in which a remote mentor annotates a live video of the mentee’s operating field using lines and icons that encode surgical instructions. The local mentee views these annotations on a nearby display. Though effective, telestration requires mentees to constantly shift focus from the operating field to the display and remap the instructions onto the actual operating field, which can lead to cognitive overload and errors.

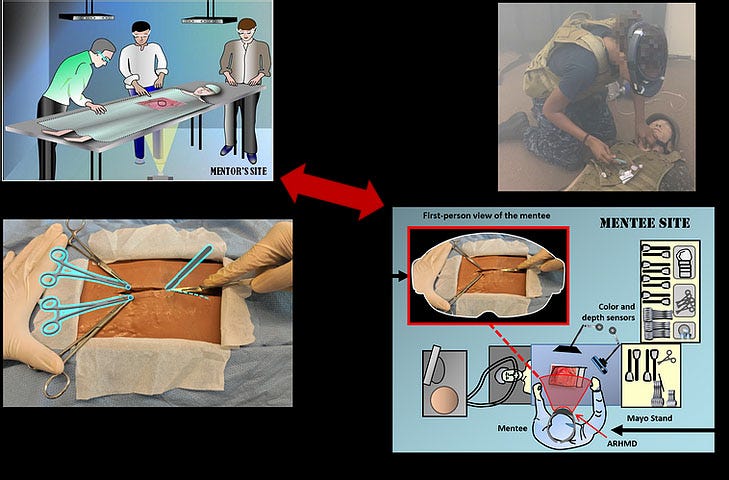

In AR telementoring, computer-generated 3D objects are superimposed onto the mentee’s field of view in real time. Most AR-based systems place tablets in a fixed position in the operating field between the patient and the mentee to display the medical guidance. These platforms are neither self-contained nor portable, and require multiple pieces of hardware, such as external cameras, screens, computers, and brackets.

In a project led by Industrial Engineering at Purdue and developed with support from the U.S. Department of Defense, our next-generation System for Telementoring with Augmented Reality (STAR) platform addresses these shortcomings by integrating an Augmented Reality Head-Mounted Display (ARHMD). An onboard camera transmits an image-stabilized view of the operating field to the mentor. The mentor creates annotations representing surgical instructions using a touch interface to draw incision lines, illustrate the placement of instruments, and so forth.

The head-mounted displays enable the medical instructions to be perceived at the correct position and depth relative to the patient’s body. They allow mentees to see the surgical field directly and don’t obstruct their hands, as the device is entirely self-contained on the head.
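As a rough illustration of what placing an annotation "at the correct position and depth" involves, the sketch below lifts 2D points from a mentor's drawing into 3D camera coordinates using a standard pinhole camera model. It is not the STAR implementation: the `Intrinsics` class, the function names, and the example camera parameters are all hypothetical, and it assumes a per-point depth estimate is already available.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point3D = Tuple[float, float, float]


@dataclass(frozen=True)
class Intrinsics:
    """Pinhole camera parameters in pixels (hypothetical example values below)."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point x
    cy: float  # principal point y


def unproject(u: float, v: float, depth_m: float, k: Intrinsics) -> Point3D:
    """Map pixel (u, v) with a known depth in metres to a 3D camera-frame point."""
    x = (u - k.cx) / k.fx * depth_m
    y = (v - k.cy) / k.fy * depth_m
    return (x, y, depth_m)


def anchor_annotation(
    pixels: Sequence[Tuple[float, float]],
    depths_m: Sequence[float],
    k: Intrinsics,
) -> List[Point3D]:
    """Lift every 2D point of a drawn line into 3D so it can be rendered in place."""
    if len(pixels) != len(depths_m):
        raise ValueError("each annotation pixel needs exactly one depth value")
    return [unproject(u, v, d, k) for (u, v), d in zip(pixels, depths_m)]


# Assumed camera: 640x480 image, 500 px focal length.
camera = Intrinsics(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
incision_line = anchor_annotation([(320.0, 240.0), (420.0, 240.0)], [0.5, 0.5], camera)
```

Once the points live in 3D, a headset can redraw them from the mentee's current viewpoint, which is why the annotation appears to stay on the patient rather than on a screen.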

We validated the platform by having surgery residents perform fasciotomies (cutting through tissue to release tension from a muscle) on cadavers, and by having U.S. Navy medics perform cricothyroidotomies (incision through the neck to obtain an airway) on patient simulators. Follow-on evaluations by experts concluded that participants receiving guidance with STAR had better surgical outcomes, performed the procedures faster and with fewer errors, and had greater confidence due to visual feedback and AR surgical instructions.

We’re now adding an artificial intelligence (AI) module to give practitioners autonomous guidance when mentors are not available, for example because of a lost network connection. The idea is to use machine vision to acquire a view of the operating field and then send the imagery to a deep learning neural network that has been trained to generate text descriptions representing surgical instructions. These instructions will then be conveyed to the surgeon via images, text, speech and videos.
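The fallback behavior described here can be sketched as a simple selection step: use the mentor's instruction when one arrives, and otherwise ask a model for one. This is a minimal sketch under stated assumptions, not the project's code; `choose_guidance`, `stub_instruction_model`, and the returned dictionary layout are hypothetical, and the stub stands in for the trained neural network.

```python
from typing import Callable, Dict, Optional


def choose_guidance(
    frame: bytes,
    mentor_instruction: Optional[str],
    instruction_model: Callable[[bytes], str],
) -> Dict[str, str]:
    """Prefer the remote mentor; fall back to the model when no instruction arrived.

    `mentor_instruction` is None when the mentor is unreachable, for example
    after a network loss (assumed convention for this sketch).
    """
    if mentor_instruction is not None:
        return {"source": "mentor", "instruction": mentor_instruction}
    return {"source": "ai", "instruction": instruction_model(frame)}


def stub_instruction_model(frame: bytes) -> str:
    """Placeholder for a trained image-to-text network; returns fixed text."""
    return "placeholder instruction"


# Hypothetical usage: one frame with the mentor online, one with the link down.
online = choose_guidance(b"frame-bytes", "Incise along the marked line", stub_instruction_model)
offline = choose_guidance(b"frame-bytes", None, stub_instruction_model)
```

Passing the model in as a function keeps the selection logic independent of whichever network is eventually used.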

In the military, prompt and adequate treatment is crucial for the survival of critically injured combatants. In healthcare in general, every practitioner, particularly those in rural regions, should also have access to some sort of telementoring and telemedicine technology.

Mentoring gets its name from Mentor, whom Odysseus appointed to instruct his son, Telemachus, when Odysseus left his home in Ithaca to sail to Troy. The mentoring process has come a long way since then, and we believe this platform can have a significant impact on surgical results.

Edgar J. Rojas Muñoz

PhD Candidate, School of Industrial Engineering

College of Engineering, Purdue University

Professor of Industrial Engineering

Professor of Biomedical Engineering (by courtesy)

Regenstrief Center for Healthcare Engineering

College of Engineering, Purdue University

Adjunct Professor of Surgery, Indiana University School of Medicine

Cagri Savran, PhD

Professor of Mechanical Engineering

Biomedical Engineering and

Electrical and Computer Engineering (by courtesy)

Anirudh Tunga

Graduate Industrial Engineering Student
