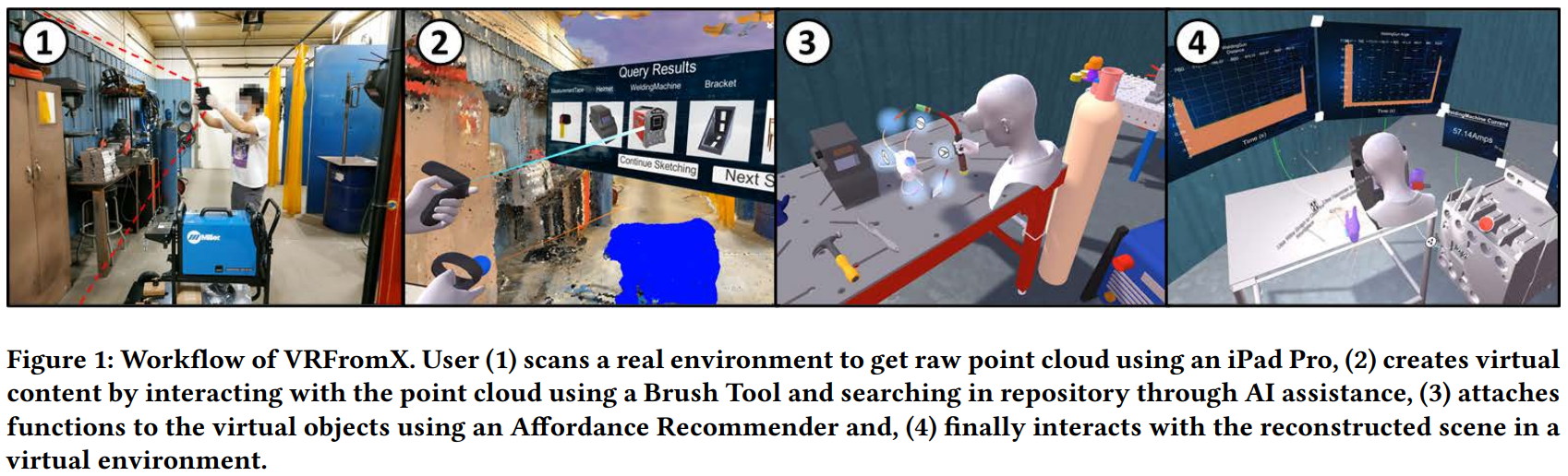

Virtual Reality (VR) applications are increasingly used in education, entertainment, and industry. Many of them draw on real-world tools, environments, and interactions as the basis for their content. However, creating such applications is tedious and fragmented, and it requires expertise in authoring VR through programming and 3D-modeling software. This hinders VR adoption by separating subject matter experts from the actual authoring process while increasing cost and time. We present VRFromX, an in-situ Do-It-Yourself (DIY) platform for content creation in VR that allows users to create interactive virtual experiences. Using our system, users can select region(s) of interest (ROI) in a scanned point cloud, or sketch in mid-air with a brush tool, to retrieve virtual models and then attach behavioral properties to them. We ran an exploratory study to evaluate the usability of VRFromX, and the results demonstrate the feasibility of the framework as an authoring tool. Finally, we implemented three use cases to showcase potential applications.
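The ROI selection step described above can be illustrated with a minimal sketch. It assumes the scan is a list of (x, y, z) points and that the user's selection is an axis-aligned box; the names `BoxROI` and `crop_point_cloud` are hypothetical, and this is not VRFromX's implementation. Model retrieval and behavior attachment are not modeled here.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float, float]


@dataclass
class BoxROI:
    """Hypothetical axis-aligned box a user might place around an object in the scan."""
    min_corner: Point
    max_corner: Point

    def contains(self, p: Point) -> bool:
        # A point is inside if every coordinate lies within [min, max] on that axis.
        return all(lo <= c <= hi for c, lo, hi in zip(p, self.min_corner, self.max_corner))


def crop_point_cloud(points: List[Point], roi: BoxROI) -> List[Point]:
    """Hypothetical helper: keep only the scanned points that fall inside the ROI."""
    return [p for p in points if roi.contains(p)]


if __name__ == "__main__":
    scan = [(0.1, 0.2, 0.3), (1.5, 0.2, 0.3), (0.5, 0.5, 0.5), (-0.2, 0.0, 0.0)]
    roi = BoxROI(min_corner=(0.0, 0.0, 0.0), max_corner=(1.0, 1.0, 1.0))
    print(crop_point_cloud(scan, roi))  # [(0.1, 0.2, 0.3), (0.5, 0.5, 0.5)]
```

The cropped subset is what a downstream retrieval step would receive; a box is used only because it is the simplest selection volume to express, and a real system could just as well use spheres, lassos, or brush strokes.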

VRFromX: From Scanned Reality to Interactive Virtual Experience with Human-in-the-Loop

Authors: Ananya Ipsita, Hao Li, Runlin Duan, Yuanzhi Cao, Subramanian Chidambaram, Min Liu, Karthik Ramani

In Extended Abstracts of the 2021 CHI Conference on Human Factors in Computing Systems

https://doi.org/10.1145/3411763.3451747

Ananya Ipsita

Ananya Ipsita has been a Master's student in the School of Mechanical Engineering at Purdue University since Fall 2018. She received her Bachelor's degree in Electronics and Communication Engineering from the National Institute of Technology, Rourkela, India. Before joining Purdue, she worked as a software engineer at SAP Labs, India, where she designed and developed analytical business solutions. Her research interests include computer vision, robotic systems, Augmented Reality (AR), and Human-Computer Interaction (HCI).