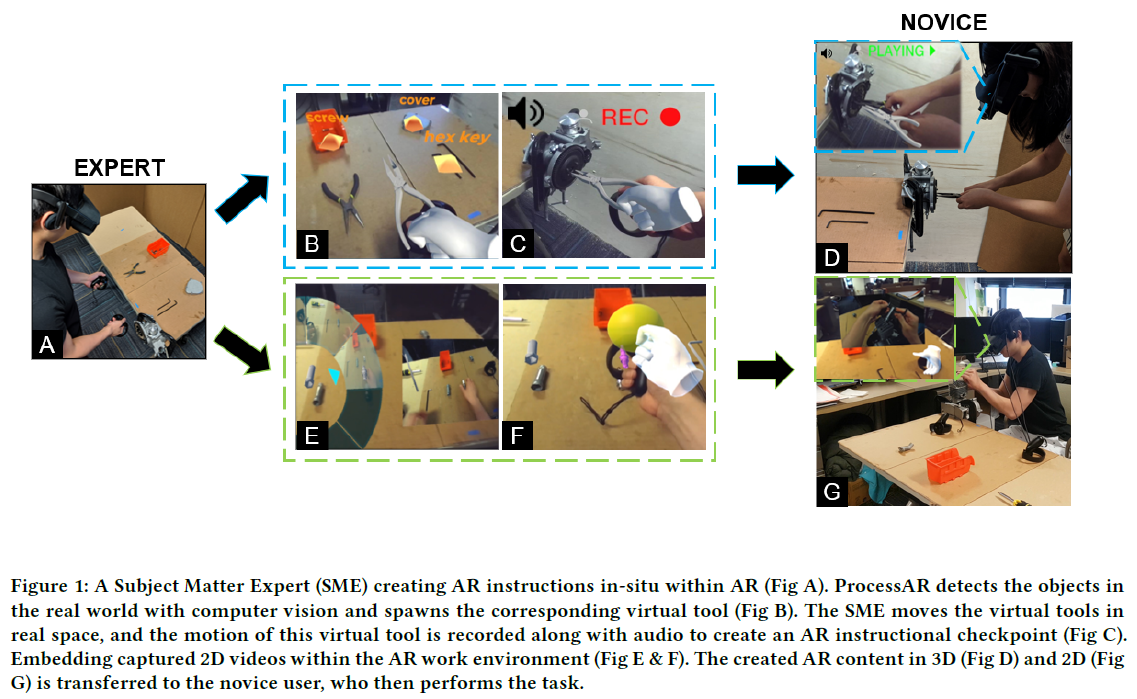

Augmented reality (AR) is an efficient way to deliver spatial information and has great potential for training workers. However, AR is still not widely used for such scenarios because of the technical skills and expertise required to create interactive AR content. We developed ProcessAR, an AR-based system for creating 2D/3D content that captures subject matter experts’ (SMEs) environment-object interactions in situ. The design space for ProcessAR was identified from formative interviews with AR programming experts and SMEs, alongside a comparative design study with SMEs and novice users. To enable smooth workflows, ProcessAR uses computer vision to locate and identify tools and objects within the workspace when the author looks at them. We explored additional features such as embedding 2D videos with detected objects and user-adaptive triggers. A final user evaluation comparing ProcessAR and a baseline AR authoring environment showed that, according to our qualitative questionnaire, users preferred ProcessAR.

ProcessAR: An augmented reality-based tool to create in-situ procedural 2D/3D AR Instructions

Authors: Subramanian Chidambaram, Hank Huang, Fengming He, Xun Qian, Ana M Villanueva, Thomas S Redick, Wolfgang Stuerzlinger, Karthik Ramani

In Proceedings of the Designing Interactive Systems Conference

https://dl.acm.org/doi/10.1145/3461778.3462126

Subramanian Chidambaram

Subramanian Chidambaram is a Ph.D. student in the School of Mechanical Engineering at Purdue University. Before joining the C Design lab, he obtained his Master’s from the School of Aeronautics and Astronautics with a minor in computational science and engineering from Purdue and a Bachelor’s degree in mechanical engineering from Vellore Institute of Technology, India. His current research interests include human-computer interaction and code-less digital interface development for authoring augmented reality (AR) and virtual reality (VR) content, collaboration with AR/VR, and instructional design for skill transfer using immersive reality and 3D user interfaces. He has also co-authored papers on tangible interfaces, and he has previously researched geometric modeling tools that guide novices to design, analyze, and fabricate functional load-bearing structures.

Personal Website
