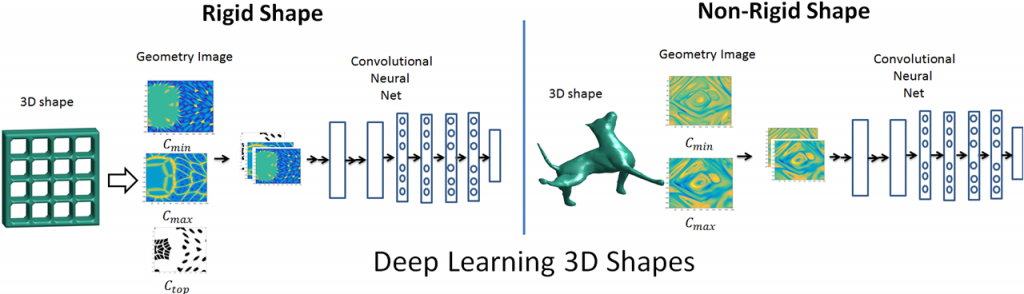

Surfaces serve as a natural parametrization of 3D shapes. Learning surfaces using convolutional neural networks (CNNs) is a challenging task. Current paradigms for tackling this challenge either adapt the convolutional filters to operate on surfaces, learn spectral descriptors defined by the Laplace-Beltrami operator, or drop surfaces altogether in favor of voxelized inputs. Here we adopt an approach of converting the 3D shape into a ‘geometry image’ so that standard CNNs can be used directly to learn 3D shapes. We qualitatively and quantitatively validate that creating geometry images using authalic parametrization on a spherical domain is suitable for robust learning of 3D shape surfaces. This spherically parametrized shape is then projected and cut to convert the original 3D shape into a flat and regular geometry image. We propose a way to implicitly learn the topology and structure of 3D shapes using geometry images encoded with suitable features. We show the efficacy of our approach in learning 3D shape surfaces for classification and retrieval tasks on non-rigid and rigid shape datasets.

Deep Learning 3D Shape Surfaces using Geometry Images

Authors: Ayan Sinha, Jing Bai, Karthik Ramani

The 14th European Conference on Computer Vision, October 11-14, 2016, Amsterdam, The Netherlands.

https://openreview.net/forum?id=ByZCoqWObr
