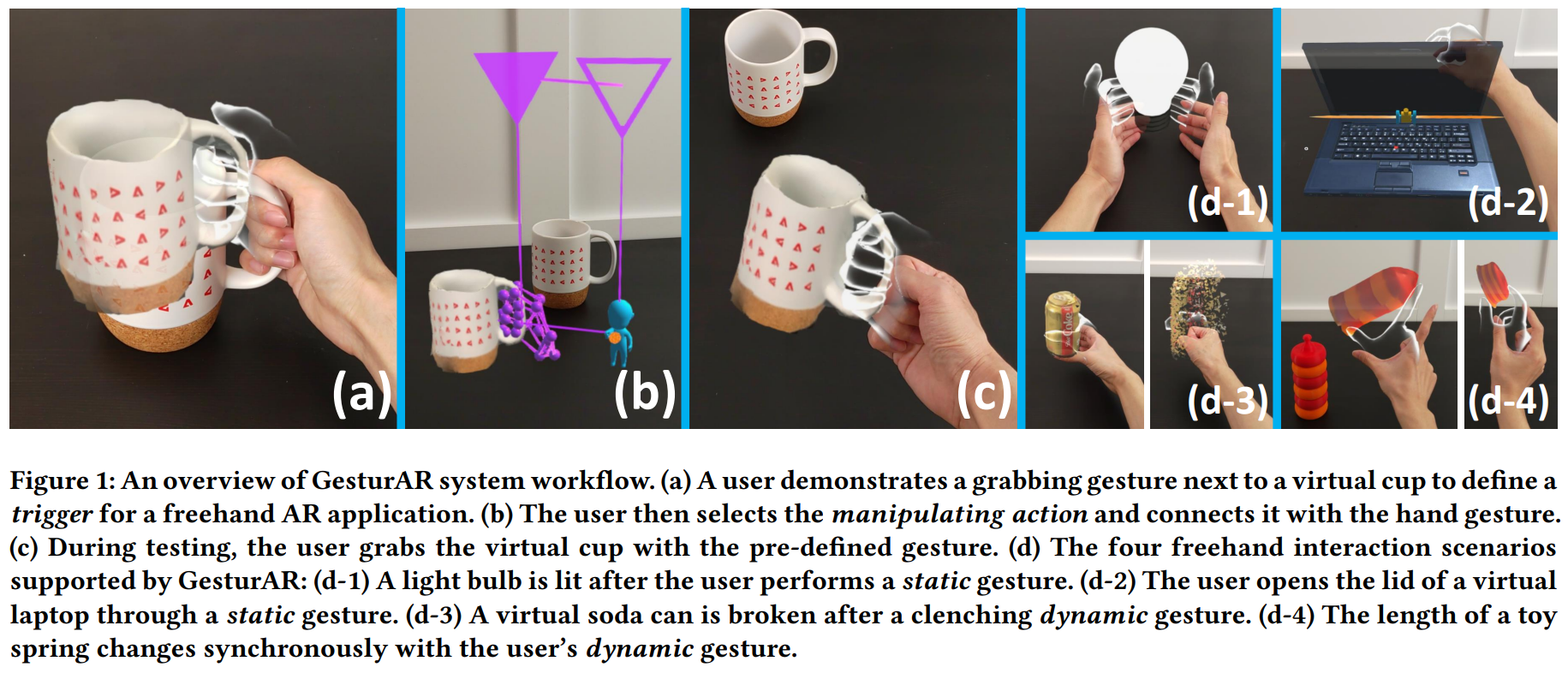

Freehand gesture is an essential input modality for modern Augmented Reality (AR) user experiences. However, developing AR applications with customized hand interactions remains a challenge for end-users. Therefore, we propose GesturAR, an end-to-end authoring tool that lets users create in-situ freehand AR applications through embodied demonstration and visual programming. During authoring, users can intuitively demonstrate customized gesture inputs while referring to the spatial and temporal context. Based on the taxonomy of gestures in AR, we propose a hand interaction model that maps gesture inputs to the reactions of the AR content. Users can thus author comprehensive freehand applications using trigger-action visual programming and instantly experience the results in AR. Further, we demonstrate multiple application scenarios enabled by GesturAR, including interactive virtual objects, robots, and avatars; room-level interactive AR spaces; and embodied AR presentations. Finally, we evaluate the performance and usability of GesturAR through a user study.

GesturAR: An Authoring System for Creating Freehand Interactive Augmented Reality Applications

Authors: Tianyi Wang, Xun Qian, Fengming He, Xiyun Hu, Yuanzhi Cao, Karthik Ramani

In The 34th Annual ACM Symposium on User Interface Software and Technology (UIST '21)

https://doi.org/10.1145/3472749.3474769

Tianyi Wang

Tianyi Wang is a Ph.D. student in the School of Mechanical Engineering at Purdue University. Before joining the C Design Lab, Tianyi received his bachelor's degree from the Department of Precision Instrument at Tsinghua University, Beijing, in 2016. Tianyi's current research interests focus on applying robotics, augmented reality, and deep learning to Human-Computer Interaction.