STAR sight for surgical guidance

| Author: | Eric Bender |
|---|---|
| Magazine Section: | Change The World |
| College or School: | CoE |
| Article Type: | Issue Feature |
| Feature Intro: | Juan Pablo Wachs rethinks telementoring to offer more help in remote operating rooms. |

Imagine you’re a surgeon working in a combat zone or a rural clinic, facing a patient in trauma who immediately needs a tricky operation you’ve never performed. Then imagine you could call on a remote surgical expert — and get “hands-on” guidance as good as the expert could provide if he or she were in your operating room.

That’s the vision of Juan Pablo Wachs, associate professor of industrial engineering, whose research focuses on how human–machine interaction can help improve surgical outcomes.

In the STAR (System for Telementoring with Augmented Reality) project, funded by the Office of the Assistant Secretary of Defense for Health Affairs, his goal is to provide visual systems that are so well integrated and feature such clear “augmented reality” guidance that the surgeon and the remote mentor can indeed feel as if they are next to each other.

Traditional surgical telementoring, which draws on relatively primitive computer graphics, is far from reaching that goal. These systems combine audio with a “telestrator” video system that displays the ongoing operation overlaid with simple graphic annotations drawn by the remote mentor. But the surgeon must continually shift his or her focus to the telestrator, which is off to one side of the operating table, and then try to translate its sketches to perform exactly the right procedures in exactly the right site on the patient.

“We’ve come up with technology that allows you to align directly in your view both the anatomy of the patient and the annotations of the mentor, eliminating the shift of focus from the patient,” Wachs says. “You are always looking at the surgical region. Therefore you eliminate delays, and the operations are much more accurate because you don’t need to realign the notations. At the same time, this is a very portable system that you can either wear on your eyes or take with you in a tablet, rather than relying on a telestrator that may not be available.”

Unlike telestrators, the STAR equipment also allows the mentor to convey a rich repertoire of gestures (showing where to place a specific instrument, for instance) and to act out complex procedures. “The goal is to make it a more natural way to communicate,” says Maria Eugenia Cabrera, a Purdue industrial engineering doctoral student working on gestural interactions.

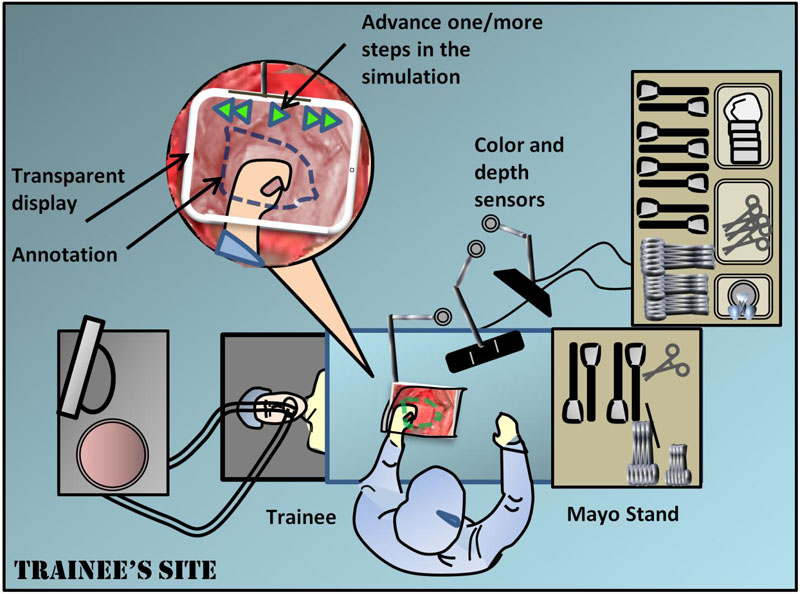

A second-generation STAR system uses two tablet PCs, one for the trainee surgeon and one for the mentor. On the trainee side, the tablet is held directly in the trainee’s line of sight to the operating field on the patient. The tablet employs multiple cameras and infrared depth sensing to give an almost transparent “window” onto the patient, and the window includes visual annotations from the mentor. This window is replicated on the mentor’s tablet, so that the mentor sees almost exactly what the trainee sees.

Wachs and his team compared this STAR approach to a telestrator method in simulated surgery with 20 Purdue pre-medical or medical students. The students received two tasks to perform on a dummy patient, one to locate sites for surgical port placements and the other to make an abdominal incision. Students using the STAR system achieved much smaller placement errors than did students using a telestrator, although they also took slightly longer, says Daniel Andersen, a Purdue computer science doctoral student working on the project and lead author of a paper about the study published in Surgery.

Improving transparency and gesture recognition

Voicu Popescu, associate professor of computer science, who develops advanced imaging systems, works with Wachs and Andersen to address the tricky problems in making the next-generation STAR telementoring more truly transparent to surgeons on both ends.

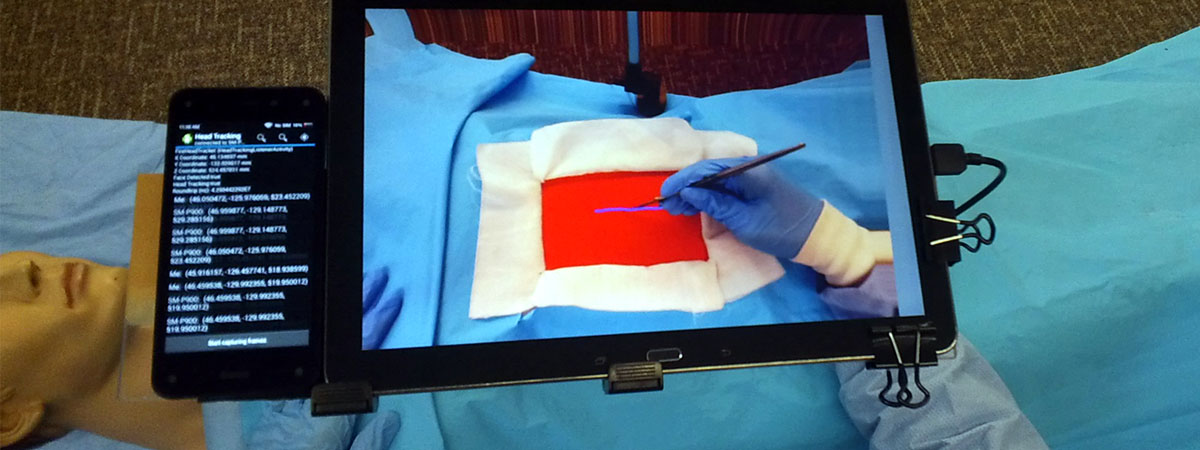

One challenge in making the tablet “disappear” is that it must change its display as the trainee surgeon’s head position changes. The current tablet prototype uses four front-facing cameras to triangulate the user’s head position, as well as an infrared sensor to capture the three-dimensional geometry of the operating field.
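To make the idea of triangulating a head position from several cameras concrete, here is a minimal sketch in Python using linear (DLT) triangulation. It is not the STAR prototype's code: the camera intrinsics `K`, the placement of the four cameras at the tablet corners, and the helper names `projection_matrix`, `project` and `triangulate` are all assumptions chosen for illustration.

```python
import numpy as np

def projection_matrix(K, R, t):
    """Build the 3x4 pinhole projection matrix P = K [R | t]."""
    return K @ np.hstack([R, t.reshape(3, 1)])

def project(P, X):
    """Project a 3D point X into pixel coordinates with projection matrix P."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]

def triangulate(projections, pixels):
    """Linear (DLT) triangulation of one 3D point from two or more views."""
    rows = []
    for P, (u, v) in zip(projections, pixels):
        # Each view contributes two linear constraints on the homogeneous point.
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.vstack(rows)
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]

# Hypothetical rig: four identical cameras at the tablet corners (meters),
# all facing the user along +z. These values are illustrative assumptions.
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
corners = [(-0.15, -0.10), (0.15, -0.10), (-0.15, 0.10), (0.15, 0.10)]
projections = [
    projection_matrix(K, np.eye(3), -np.array([cx, cy, 0.0]))
    for cx, cy in corners
]

# Simulated head position half a meter in front of the tablet.
head = np.array([0.05, -0.02, 0.50])
pixels = [project(P, head) for P in projections]

estimate = triangulate(projections, pixels)
print(estimate)
```

With noise-free detections, the estimate matches the simulated head position to numerical precision. A real system would also need to detect the head in each image and cope with noisy measurements, which this sketch does not attempt.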

The Purdue researchers also are looking at eyewear as a potentially more elegant alternative to tablets. “We experimented with Google Glass, but the results were not very promising, because it’s not truly augmented reality,” Wachs says. One of the problems with the Google eyewear is that the mentor’s annotations appear on a small screen in the corner of the trainee’s vision rather than overlaid on the trainee’s view of the surgical area.

Another major goal for the team is to build a better repertoire of nonverbal communications. “How surgeons use hand movements or gestures to interact and to teach in the operating room is an issue that fascinates me,” Wachs says. “We also have the opportunity to explore how this physical instruction can be conveyed remotely. We know very well how to convey speech remotely and how to convey images, but there’s not a lot of research about how we can convey physical instruction.”

“We’re fairly good at two-dimensional communications now,” says Brian Mullis, M.D., Chief of Orthopaedic Trauma Service and an associate professor of orthopaedic surgery at the Indiana University School of Medicine and a collaborator in the STAR project. “That’s the easy part. The problem is that surgery is three-dimensional, not two-dimensional, so how do you convey depth to the person on the other side?”

Wachs, Cabrera and their colleagues partnered with Mullis, chief trauma surgeon Gerardo Gomez, clinical nurse Sherri Marley and other staff at Eskenazi Hospital in Indianapolis to observe surgeons as they simulated telementoring for a certain limb-saving procedure. “They could use surgical instruments, or hand movements or touch,” Wachs says. “Once we understand all these functional interactions, we can find ways to convey that in a realistic manner.”

Additionally, the STAR team aims to replace the mentor’s tablet with a life-size virtual operation table, somewhat like a huge iPad tablet. “We will actually project the image of the virtual patient on a large interactive surface with a size similar to the patient bed, so other surgeons can be co-located around this virtual patient at the same time,” Wachs says. “They can work together, conveying their actionable knowledge through gestures.”

“We want to adapt the system to the mentor, not the other way around,” adds Cabrera. One ambitious goal is “one-shot learning” for gestures, in which the surgeon could make an air or hand gesture and explain its meaning, and the system then would learn how to characterize the gesture, translate it and display it to trainees in future sessions.
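The idea of learning a gesture from a single demonstration can be sketched with a very simple stand-in technique: store one normalized trajectory per gesture and label new motions by the nearest stored template. This is only an illustration, not the approach the STAR team uses or plans to use; the class `OneShotGestures`, the helper `normalize` and the 2D example trajectories are hypothetical.

```python
import numpy as np

def normalize(traj, n=16):
    """Resample a trajectory to n points by arc length, then center and scale it."""
    traj = np.asarray(traj, dtype=float)
    seg = np.linalg.norm(np.diff(traj, axis=0), axis=1)
    s = np.concatenate([[0.0], np.cumsum(seg)])
    target = np.linspace(0.0, s[-1], n)
    resampled = np.column_stack(
        [np.interp(target, s, traj[:, d]) for d in range(traj.shape[1])]
    )
    resampled -= resampled.mean(axis=0)   # remove position
    scale = np.linalg.norm(resampled)     # remove overall size
    return resampled / scale if scale > 0 else resampled

class OneShotGestures:
    """Nearest-template recognizer that learns each gesture from one example."""

    def __init__(self):
        self.templates = {}

    def teach(self, label, traj):
        # A single demonstration becomes the template for this label.
        self.templates[label] = normalize(traj)

    def recognize(self, traj):
        query = normalize(traj)
        return min(
            self.templates,
            key=lambda label: np.linalg.norm(self.templates[label] - query),
        )

# One demonstration per gesture (made-up 2D hand paths).
t = np.linspace(0.0, 1.0, 20)
theta = np.linspace(0.0, 2.0 * np.pi, 20)
book = OneShotGestures()
book.teach("line", np.column_stack([t, np.zeros_like(t)]))
book.teach("circle", np.column_stack([np.cos(theta), np.sin(theta)]))

# A larger, shifted circle sampled at a different rate.
phi = np.linspace(0.0, 2.0 * np.pi, 30)
query = 3.0 * np.column_stack([np.cos(phi), np.sin(phi)]) + 5.0
print(book.recognize(query))
```

Because the templates are centered and scaled, the recognizer ignores where and how large the gesture is drawn. It does not handle rotation, timing, or 3D hand pose, all of which a practical system would have to address.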

Saving lives in the field

If the STAR approach can be proven in studies on animals and cadavers, and then in clinical trials, Wachs foresees many significant contributions to healthcare, especially in locations far from major medical centers.

For example, advanced telementoring could be crucial to help a patient who urgently needs a complex procedure for which a rural hospital lacks a specialist.

STAR-style telementoring also holds high promise for boosting medical care on the battlefield, says Mullis, a former military combat surgeon.

One application would be to aid corpsmen and medics who may be under live fire or in a helicopter bringing wounded back to a surgical facility, and who may have relatively little surgical training, he says.

“Front-line medical staff could keep iPad-like telementoring tablets in their rucksacks, which would offer a foundational tool to really help deliver immediate care to our injured warriors in the field,” Mullis suggests.

Additionally, Mullis predicts such telementoring could play a large role in broadening the skills of surgeons who are relatively new to combat operations. “We could help junior surgeons who have limited trauma experience and are trying to save our soldiers’ lives.”
