Research Areas:

Current Research

Humanoid Research

| Ladder Climbing Motion Generation & Control for Humanoid Robots | |

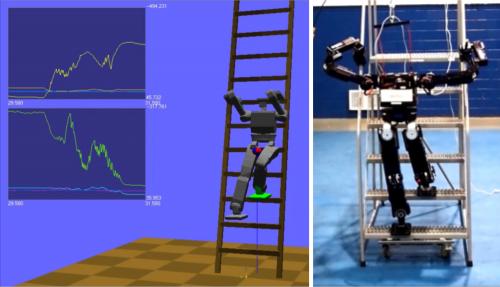

| Objectives: In this project, we developed a ladder-climbing control framework for humanoid robots. In collaboration with Indiana University, we participated in the DRC-Hubo team (Track A) at the DRC Trials (Dec. 2013, Miami), funded by DARPA. The ladder-climbing control is modeled after stair climbing and minimizes the gripping force used for climbing, which allows existing humanoid robots to perform ladder-climbing tasks. Indiana University developed the motion planning framework and software, with improved handling of collision avoidance, and Purdue developed the algorithms, dynamics computations, and control based on a whole-body model of humanoid robots. Publications: 1. "Motion Planning of Ladder Climbing for Humanoid Robots" Y. Zhang, J. Luo, K. Hauser, R. Ellenberg, P. Oh, H. A. Park, M. Paldhe, and C.S.G. Lee, In proceedings of IEEE Conf. on Technologies for Practical Robot Applications (TePRA), April 2013 2. "Motion Planning of Ladder Climbing for Humanoid Robots" Jingru Luo, Yajia Zhang, Kris Hauser, H. Andy Park, Manas Paldhe, C. S. George Lee, Michael Grey, Mike Stilman, Jun Ho Oh, Jungho Lee, Inhyeok Kim, and Paul Oh, To be presented at IEEE Conf. on Robotics and Automation (ICRA 2014), May 2014 Videos |

| Posture-based Control Concept | |

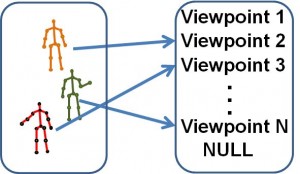

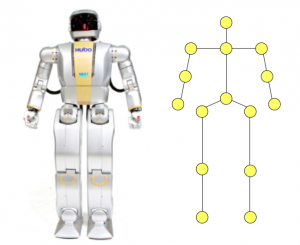

| Objectives: In this ongoing research, we propose a posture-based control concept for humanoid robots, which are modeled as 14 body segments connected by 15 points. A posture is defined by the 3D positions of these 15 points. Applications: human-human interaction, falls, action/activity recognition, etc. |
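The posture definition above can be sketched as a small data structure. This is only an illustration under stated assumptions: the point ordering, the `SEGMENTS` connectivity, and the helper names `segment_lengths` and `posture_distance` are hypothetical, since the page does not say which points are joined or how postures are compared.

```python
import math
from typing import List, Sequence, Tuple

Point3D = Tuple[float, float, float]

NUM_POINTS = 15    # from the text: a posture is the 3D positions of 15 points
NUM_SEGMENTS = 14  # from the text: 14 body segments

# Hypothetical connectivity (pairs of point indices). The page does not list
# the actual joints; any tree over 15 points has exactly 14 edges.
SEGMENTS: List[Tuple[int, int]] = [
    (0, 1), (1, 2),               # torso -> neck -> head
    (1, 3), (3, 4), (4, 5),       # left arm
    (1, 6), (6, 7), (7, 8),       # right arm
    (0, 9), (9, 10), (10, 11),    # left leg
    (0, 12), (12, 13), (13, 14),  # right leg
]


def check_posture(posture: Sequence[Point3D]) -> None:
    """Raise ValueError unless the posture has exactly 15 3D points."""
    if len(posture) != NUM_POINTS or any(len(p) != 3 for p in posture):
        raise ValueError("a posture must contain 15 points with 3 coordinates each")


def segment_lengths(posture: Sequence[Point3D]) -> List[float]:
    """Length of each of the 14 segments for the given posture."""
    check_posture(posture)
    return [math.dist(posture[a], posture[b]) for a, b in SEGMENTS]


def posture_distance(p: Sequence[Point3D], q: Sequence[Point3D]) -> float:
    """Mean Euclidean distance between corresponding points (an assumed metric)."""
    check_posture(p)
    check_posture(q)
    return sum(math.dist(a, b) for a, b in zip(p, q)) / NUM_POINTS
```

A metric of this kind is one simple way to compare an observed posture with a reference posture, for example in activity recognition; the metric actually used in the research is not stated here.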

| Open-source Humanoid Robot Control Software Package | |

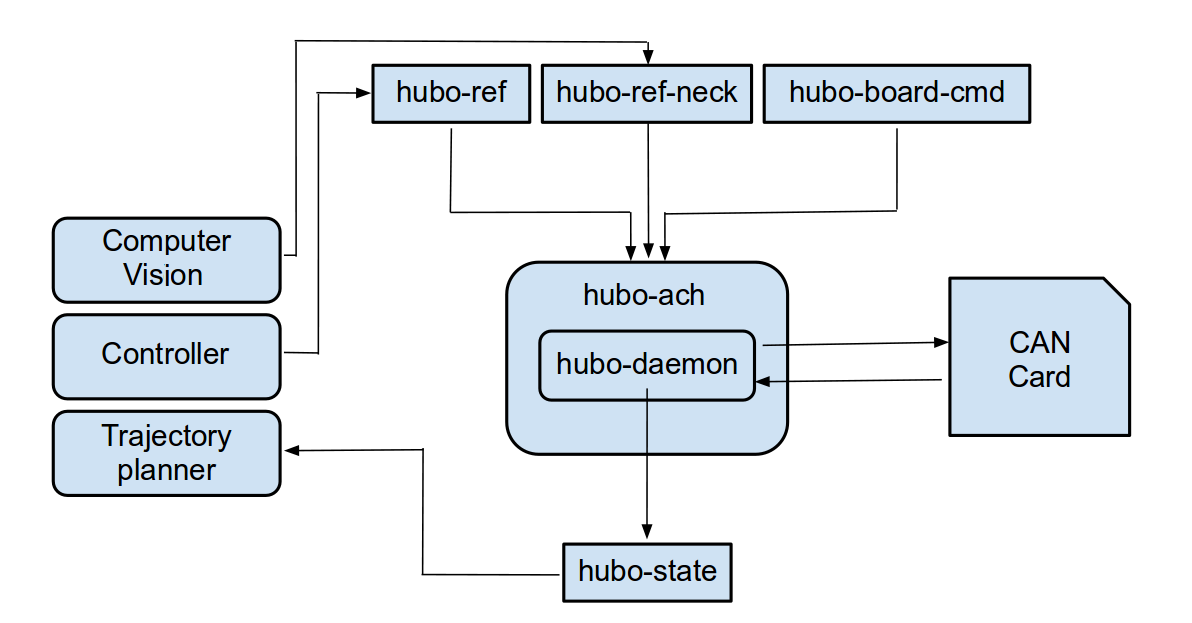

| Objectives: We have been developing open-source software packages for controlling a humanoid robot. The packages use an architecture similar to that of the Robot Operating System (ROS), but are better suited to controlling humanoid robots. Some of these software packages were used during the DARPA Robotics Challenge. Application: The software packages can be used for various tasks such as ladder climbing and valve turning. |

| Whole-body Balancing for Humanoid Robots | |

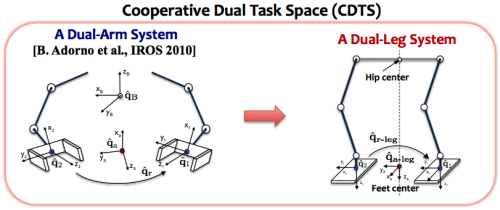

| Objectives: The goal of this project was to develop a general framework for generating a library of balanced whole-body motions by merging upper-body motions transferred from humans with lower-body motions generated by existing walking pattern planners. Based on our observation that humans achieve balance through coordinated leg movements, we applied the cooperative-dual-task-space representation, which is used for coordinated motion tasks of two-arm systems, to describe and control the coordination between the legs. This representation cleanly decouples the variables for the feet constraints from those for the waist position, allowing us to adjust the balance while maintaining the feet constraints. The proposed method achieves balance in a unified manner for static and dynamic lower-body movements, which other methods handle very differently. Publications: "Cooperative-Dual-Task-Space-based Whole-body Motion Balancing for Humanoid Robots" H. Andy Park and C.S. George Lee In proceedings of IEEE Conf. on Robotics and Automation (ICRA 2013), May 2013 Videos |

| Uneven Walking Pattern Generation for Humanoid Robots | |

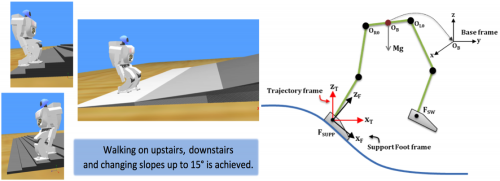

| Objectives: In this project, our goal was to generalize an existing walking pattern planner to uneven terrain such as slopes and stairs. We modified the convolution-sum-based walking pattern generator in two ways: a low-pass filter removes jerkiness from the generated Center-of-Mass (CoM) motion, and additional ankle-joint and vertical CoM movements adapt the gait to the uneven terrain. The proposed method was successfully validated on the HOAP-2 robot in Webots. Publications: "Convolution-Sum-Based Generation of Walking Patterns for Uneven Terrains" H. Andy Park, Muhammad A. Ali and C.S. George Lee In proceedings of IEEE-RAS Conf. on Humanoid Robots (Humanoids 2010), Dec. 2010 |
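The smoothing step mentioned above can be illustrated with a first-order low-pass filter applied to one coordinate of a sampled CoM trajectory. This is a minimal sketch, not the filter used in the paper: the filter order, the `cutoff_hz` value, and the function name `low_pass` are assumptions, as the page only says that a low-pass filter was used.

```python
import math
from typing import List, Sequence


def low_pass(samples: Sequence[float], dt: float, cutoff_hz: float) -> List[float]:
    """First-order low-pass filter: y[k] = y[k-1] + alpha * (x[k] - y[k-1]).

    samples   : one coordinate of the CoM trajectory, sampled every dt seconds
    cutoff_hz : assumed cutoff frequency (not given in the source)
    """
    if dt <= 0 or cutoff_hz <= 0:
        raise ValueError("dt and cutoff_hz must be positive")
    if not samples:
        return []
    tau = 1.0 / (2.0 * math.pi * cutoff_hz)  # filter time constant
    alpha = dt / (tau + dt)                  # smoothing factor in (0, 1)
    y = samples[0]                           # start at the first sample
    filtered = []
    for x in samples:
        y += alpha * (x - y)
        filtered.append(y)
    return filtered


# A step in the raw CoM reference becomes a gradual transition.
raw = [0.0] * 5 + [0.05] * 20
smooth = low_pass(raw, dt=0.01, cutoff_hz=5.0)
```

A lower cutoff gives a smoother trajectory at the cost of more lag behind the planned CoM reference, so the value would need tuning against the walking period.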

| Closed-Form Inverse Kinematic Joint Position Solutions for Humanoid Robots | |

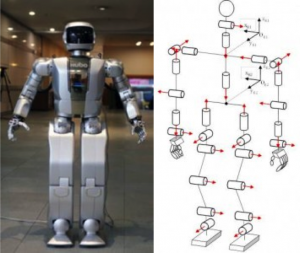

| Objectives: In this project, we aimed to obtain closed-form joint position solutions for most existing humanoid platforms: Hubo KHR-4, ASIMO, HRP-2, and HOAP-2. We developed a novel "Reversed-Decoupling" approach that yields closed-form position solutions for upper and lower limbs with at most 6 DoFs. Publications: 1. “Closed-Form Inverse Kinematic Joint Solution for Humanoid Robots” H. Andy Park, Muhammad A. Ali and C.S. George Lee The International Journal of Humanoid Robotics, Vol. 9, No. 3 (2012) 2. “Closed-Form Inverse Kinematic Joint Solution for Humanoid Robots” Muhammad A. Ali, H. Andy Park and C.S. George Lee In proceedings of IEEE/RSJ Intl. Conf. on Intelligent Robots and Systems (IROS), October 2010 |
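To show what a closed-form joint position solution looks like, the sketch below solves the textbook planar two-link arm analytically. It is deliberately a much simpler stand-in and is not the "Reversed-Decoupling" approach for 6-DoF limbs; the function names and link lengths are illustrative assumptions.

```python
import math
from typing import Tuple


def two_link_fk(q1: float, q2: float, l1: float, l2: float) -> Tuple[float, float]:
    """Forward kinematics of a planar two-link arm (joint angles in radians)."""
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y


def two_link_ik(x: float, y: float, l1: float, l2: float,
                elbow_sign: int = 1) -> Tuple[float, float]:
    """Closed-form inverse kinematics of the same arm.

    elbow_sign selects one of the two solution branches (+1 or -1).
    Raises ValueError if the target lies outside the reachable workspace.
    """
    # Law of cosines gives cos(q2) directly from the target distance.
    c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(c2) > 1.0 + 1e-9:
        raise ValueError("target out of reach")
    c2 = max(-1.0, min(1.0, c2))  # guard against rounding at the boundary
    s2 = elbow_sign * math.sqrt(1.0 - c2 * c2)
    q2 = math.atan2(s2, c2)
    q1 = math.atan2(y, x) - math.atan2(l2 * s2, l1 + l2 * c2)
    return q1, q2


# Round trip: the analytic solution reproduces the target without iteration.
q1, q2 = two_link_ik(0.3, 0.2, l1=0.25, l2=0.25)
x, y = two_link_fk(q1, q2, l1=0.25, l2=0.25)
```

Unlike an iterative numerical solver, a closed-form solution returns every branch directly and in constant time, which is why it is attractive for real-time humanoid control.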

3D Point Cloud for Human-Pose Estimation

Multiple lane detection using a novel edge similarity metric and Bezier curves

| Objectives: Detect multiple lane markings on both straight and curved roads for the following applications. | |
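Since the method models lane markings with Bezier curves, the sketch below shows how a Bezier curve is evaluated from its control points using De Casteljau's algorithm. The curve degree, the control-point values, and the function names are assumptions for illustration; the page does not describe how the control points are fitted to detected edges.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def bezier_point(control_points: Sequence[Sequence[float]], t: float) -> Point:
    """Evaluate a Bezier curve at parameter t in [0, 1] (De Casteljau)."""
    if not control_points:
        raise ValueError("at least one control point is required")
    pts = [tuple(float(c) for c in p) for p in control_points]
    # Repeatedly interpolate between neighbours until one point remains.
    while len(pts) > 1:
        pts = [
            tuple((1.0 - t) * a + t * b for a, b in zip(p, q))
            for p, q in zip(pts, pts[1:])
        ]
    return pts[0]


def sample_bezier(control_points: Sequence[Sequence[float]], n: int) -> List[Point]:
    """Return n evenly spaced (in t) points along the curve, n >= 2."""
    if n < 2:
        raise ValueError("n must be at least 2")
    return [bezier_point(control_points, i / (n - 1)) for i in range(n)]


# Hypothetical image-space control points (pixels) for one curved lane marking.
lane = [(320.0, 480.0), (330.0, 380.0), (360.0, 300.0), (420.0, 240.0)]
polyline = sample_bezier(lane, 20)
```

A cubic curve (four control points) can represent both a straight marking, when the points are collinear, and a gently curved one, which fits the stated goal of handling straight and curved roads with one model.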

| Dataset | Total frames | False positives | Lane 1 detection rate (immediate lane markers) | Lane 2 detection rate | Lane 3 detection rate |
|---|---|---|---|---|---|
| KITTI Dataset (Karlsruhe Institute of Technology, KIT) | 355 | 22 (6.19%) | 98.31% | 98.31% | 93.8% |
| Suburban Bridge Dataset (University of Auckland) | 850 | 293 (34.47%) | 93.76% | 93.17% | not reported |
| Suburban Trailer Follow (University of Auckland) | 700 | 43 (6.14%) | 92.71% | 99.85% | not reported |

Videos